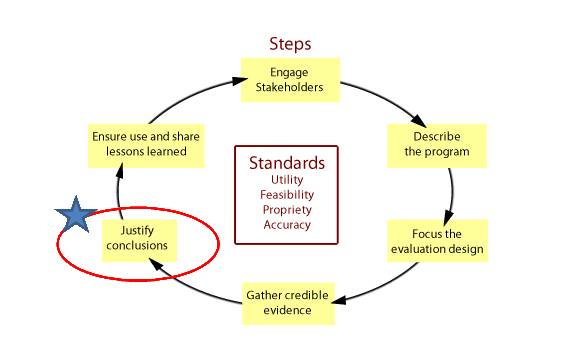

Justify Conclusions

Once your data have been collected, it is time to analyze them and draw conclusions about the program based on your evaluation questions.

The analyses performed during this step depend on your evaluation questions as well as the type of data you have collected. In this step, focus both on organizing and processing the data properly and on presenting the results so that they are easy for your various audiences to understand.

If you have used multiple research methods (for example, if you have both quantitative and qualitative data), you can analyze each individually and then compare and synthesize the results. Having multiple sources and types of information in your evaluation may help to confirm your conclusions or add nuance to them.

For quantitative analyses, consulting the biostatistics module may be helpful.

What if your evaluation shows no effect, or the program is not meeting its goals?

In this situation, your analyses and conclusions should focus on why. Perhaps a process evaluation would be helpful at this point to see if the program was implemented as intended.

It could also be that your evaluation was not sufficiently powered to detect an effect that actually exists. Failing to detect a real effect is a Type II error.
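To illustrate how sample size relates to the risk of a Type II error, the following is a minimal sketch in Python. It assumes a simple two-group comparison of proportions analyzed with a two-sided z-test and uses a normal approximation; the function name `power_two_proportions` and the example proportions and sample sizes are hypothetical and are not taken from any particular evaluation.

```python
from math import sqrt
from statistics import NormalDist


def power_two_proportions(p_control, p_program, n_per_group, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two proportions.

    Uses a normal approximation with the unpooled standard error and
    ignores the (usually negligible) chance of rejecting in the wrong
    direction. Power is the probability of detecting a true difference;
    the Type II error rate is 1 - power.
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)  # critical value, about 1.96 for alpha = 0.05
    diff = abs(p_program - p_control)
    se = sqrt(
        p_control * (1 - p_control) / n_per_group
        + p_program * (1 - p_program) / n_per_group
    )
    return z.cdf(diff / se - z_crit)


# Hypothetical example: the outcome occurs in 30% of the comparison group
# and 40% of program participants.
for n in (50, 400):
    power = power_two_proportions(0.30, 0.40, n)
    print(f"n per group = {n}: power = {power:.2f}, Type II error = {1 - power:.2f}")
```

Under these assumptions, the smaller evaluation would miss the same true difference far more often than the larger one. For an actual evaluation, a power calculation should match the planned analysis and is best done with a statistician.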

If all other explanations have been explored, then it is possible that the null hypothesis is true, and that the program has no effect. Though this can be disappointing for stakeholders, it can be an opportunity to explore what could improve the program or if there are other programs that may be more impactful. Again, a process evaluation might be very helpful here to explore the different aspects of the program and how it was implemented in detail.

If you do find an effect, how do you decide if this effect is meaningful?

How do you decide whether a program has been successful?

Choose program standards. The CDC recommends articulating program standards to help judge program performance. These should be agreed upon with stakeholders, and may depend on the type and scope of the program. Some examples to consider (from the CDC guide for health program evaluation) are:

- Needs of participants

- Community values, expectations, and norms

- Program mission and objectives

- Program protocols and procedures

- Performance by similar programs

- Performance by a control or comparison group

- Resource efficiency

- Mandates, policies, regulations, and laws

- Judgments of participants, experts, and funders

- Institutional goals

- Social equity

- Human rights

Whatever performance indicator(s) you use to define a program's success, consider, as you have at other points in the process, the program's developmental stage, stakeholder expectations, and the overall logic model of the program.

Defining Success for Health Bucks

For the following questions, consider the Health Bucks program.

- If you were the evaluator of the Health Bucks program, what indicators would you consider as program standard(s)?

- Using this program standard, how would you define success for the Health Bucks initiative?

Interpretations and Judgments

Compare your findings to your selected standard(s) to explore the impacts of your program. From this interpretation, which can draw on multiple program standards, you form judgments regarding how well the program met those standards.

The CDC's Introduction to Program Evaluation for Comprehensive Tobacco Control Programs provides some helpful tips to consider when interpreting your findings, such as:

- Interpret evaluation results with the goals of your program in mind.

- Keep your audience in mind when preparing the report. What do they need and want to know?

- Consider the limitations of the evaluation, such as:

  - Possible biases

  - Validity of results

  - Reliability of results

- Are there alternative explanations for your results?

- How do your results compare with those of similar programs?

- Have the different data collection methods used to measure your progress shown similar results?

- Are your results consistent with theories supported by previous research?

- Are your results similar to what you expected? If not, why do you think they may be different?

Justify Conclusions Checklist

Source - CDC manual for Health Program

- Check data for errors

- Consider issues of context when interpreting data

- Assess results against available literature and results of similar programs

- If multiple methods have been employed, compare different methods for consistency in findings

- Consider alternative explanations

- Use existing standards (e.g., Healthy People 2010 objectives) as a starting point for comparison

- Compare program outcomes with those of previous years

- Compare actual with intended outcomes

- Document potential biases

- Examine the limitations of the evaluation